Here I describe how to transform the coordinates of a given point from the camera coordinate frame into a body coordinate frame. I am still confused by the concepts of vectors, matrices, coordinate systems and transformations, and I feel I am lacking some intuition. I also realise I may not need a transformation at all: I will not use the joint coordinates themselves as features but the differences between joints (lengths and angles), for which the transformation is irrelevant. Nevertheless, here’s what I want to take note of. A few helpful references on matrix transformations are given under the Background knowledge section.

Summary

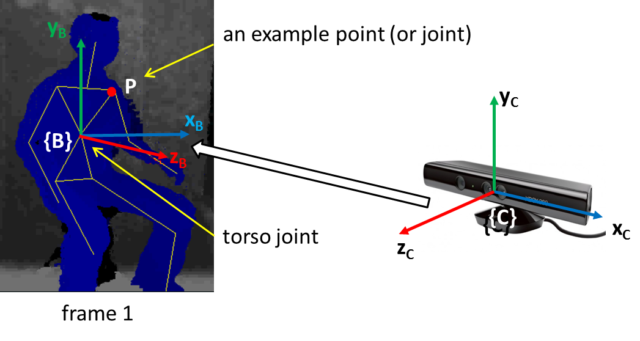

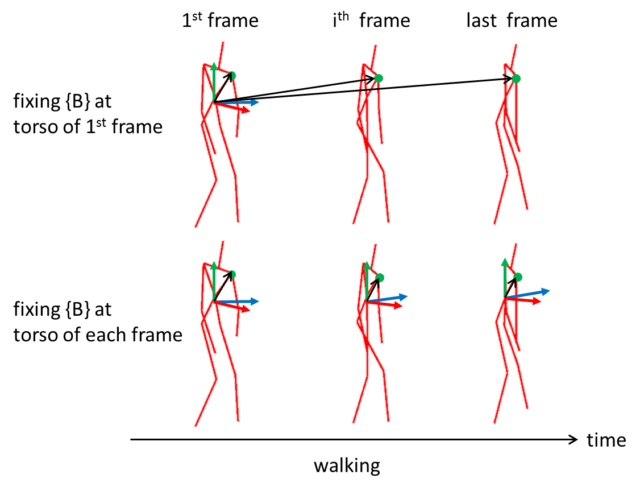

The idea is to transform the coordinate frame from camera {C} to the torso joint of the first frame {B}.

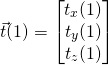

First, obtain the following joint coordinates from the first frame of an activity instance captured by the Kinect (i.e. in {C}). Note that I have chosen to define the body coordinate frame at the torso joint in the first frame.

torso joint

$$\vec{T}^{C} = \langle x^{T},\, y^{T},\, z^{T} \rangle \tag{1}$$

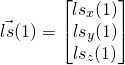

left shoulder joint

$$\overrightarrow{LS}^{C} = \langle x^{LS},\, y^{LS},\, z^{LS} \rangle \tag{2}$$

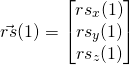

right shoulder joint

$$\overrightarrow{RS}^{C} = \langle x^{RS},\, y^{RS},\, z^{RS} \rangle \tag{3}$$

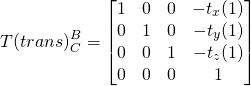

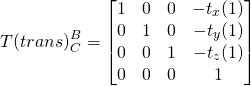

Second, form the translation matrix (in homogeneous form, i.e. 4×4) that moves the origin of {B} onto the origin of {C}. Here we use the position of the torso joint. Note that the translation is the reverse (the negative) of the torso joint vector. This translation effectively moves {B} onto {C}: imagine moving the person in the image above to the center of the Kinect.

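$$\mathrm{Trans} = \begin{bmatrix} 1 & 0 & 0 & -x^{T} \\ 0 & 1 & 0 & -y^{T} \\ 0 & 0 & 1 & -z^{T} \\ 0 & 0 & 0 & 1 \end{bmatrix} \tag{4}$$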

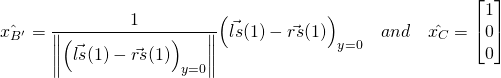
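The translation step can be sketched in a few lines of Python (this is not the Octave code of this post). The helper names `translation_matrix` and `apply` and the torso coordinates are made up for illustration; the only point is that the torso joint lands on the origin.

```python
def translation_matrix(tx, ty, tz):
    """4x4 homogeneous matrix that shifts a point by (tx, ty, tz)."""
    return [
        [1.0, 0.0, 0.0, tx],
        [0.0, 1.0, 0.0, ty],
        [0.0, 0.0, 1.0, tz],
        [0.0, 0.0, 0.0, 1.0],
    ]


def apply(matrix, point):
    """Pad a 3-D point with 1, multiply by a 4x4 matrix, drop the padding."""
    padded = list(point) + [1.0]
    result = [sum(row[k] * padded[k] for k in range(4)) for row in matrix]
    return result[:3]


# Hypothetical torso position in {C}, in millimetres.
torso = (120.0, 250.0, 2100.0)

# The translation is the reverse of the torso joint vector.
trans = translation_matrix(-torso[0], -torso[1], -torso[2])

moved_torso = apply(trans, torso)           # the torso becomes the origin
moved_camera = apply(trans, (0.0, 0.0, 0.0))  # the camera origin shifts by -torso
```

Every other joint is shifted by the same amount, so distances between joints are unchanged.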

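Third, obtain the unit vectors of the x-axes of the two reference frames. For {B}, we assume the x-axis runs along the shoulders, pointing to the left of the person. Its unit vector is computed from the difference between the left and right shoulder vectors, with the y-component set to zero so that it stays parallel to the x-z plane, normalized to unit length. For {C}, it is simply a unit-length vector pointing along the x-axis of {C}.

$$\hat{x}^{B} = \frac{\langle x^{LS}-x^{RS},\; 0,\; z^{LS}-z^{RS} \rangle}{\sqrt{(x^{LS}-x^{RS})^{2} + (z^{LS}-z^{RS})^{2}}}, \qquad \hat{x}^{C} = \langle 1, 0, 0 \rangle \tag{5}$$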

Fourth, compute the rotation angle $\theta$ as the angle between the two unit x-axis vectors. We use the inverse cosine of the dot product of the two unit vectors to determine the angle between them, and choose the sign such that it will rotate $\hat{x}^{B}$ into the orientation of $\hat{x}^{C}$.

$$\theta = \operatorname{sgn}\left(y^{n}\right)\cos^{-1}\left(\hat{x}^{B} \cdot \hat{x}^{C}\right), \qquad \vec{n} = \hat{x}^{B} \times \hat{x}^{C} \tag{6}$$

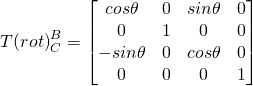
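Steps three and four can be sketched together in Python (not the Octave code of this post). The function names and the shoulder coordinates are hypothetical. The sign is taken from the z-component of the body x-axis, which is exactly the y-component of its cross product with <1, 0, 0>.

```python
import math


def body_x_axis(left_shoulder, right_shoulder):
    """Unit vector from right to left shoulder, flattened onto the x-z plane."""
    dx = left_shoulder[0] - right_shoulder[0]
    dz = left_shoulder[2] - right_shoulder[2]
    length = math.hypot(dx, dz)
    if length == 0.0:
        raise ValueError("shoulders coincide in the x-z plane")
    return (dx / length, 0.0, dz / length)


def signed_angle_to_camera_x(x_body):
    """Angle about y that rotates x_body onto the camera x-axis <1, 0, 0>."""
    # Dot product with <1, 0, 0> is just the x-component; clamp for safety.
    cos_theta = max(-1.0, min(1.0, x_body[0]))
    magnitude = math.acos(cos_theta)
    # y-component of (x_body x <1, 0, 0>) equals the z-component of x_body.
    return math.copysign(magnitude, x_body[2])


# Hypothetical shoulder joints in {C}, in millimetres.
left_shoulder = (300.0, 400.0, 2000.0)
right_shoulder = (0.0, 410.0, 1700.0)

x_body = body_x_axis(left_shoulder, right_shoulder)
theta = signed_angle_to_camera_x(x_body)  # 45 degrees for these numbers
```

Swapping the two shoulders flips the body x-axis, so the angle changes by 180 degrees; this is worth checking given that OpenNI labels left and right from the sensor's point of view.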

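Fifth, form the homogeneous transformation matrix for the rotation about the y-axis that rotates $\hat{x}^{B}$ into the orientation of $\hat{x}^{C}$.

$$\mathrm{Rot}_y(\theta) = \begin{bmatrix} \cos\theta & 0 & \sin\theta & 0 \\ 0 & 1 & 0 & 0 \\ -\sin\theta & 0 & \cos\theta & 0 \\ 0 & 0 & 0 & 1 \end{bmatrix} \tag{7}$$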
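A quick way to convince myself that the rotation matrix has its sines in the right places is to rotate a known vector. The sketch below (Python, with made-up helper names `rotation_about_y` and `apply`) assumes the standard right-handed rotation about the y-axis and checks that a body x-axis lying at 30 degrees from the camera x-axis is rotated onto <1, 0, 0>.

```python
import math


def rotation_about_y(theta):
    """4x4 homogeneous rotation about the y-axis (right-hand rule)."""
    c, s = math.cos(theta), math.sin(theta)
    return [
        [c, 0.0, s, 0.0],
        [0.0, 1.0, 0.0, 0.0],
        [-s, 0.0, c, 0.0],
        [0.0, 0.0, 0.0, 1.0],
    ]


def apply(matrix, point):
    """Pad a 3-D point with 1, multiply by a 4x4 matrix, drop the padding."""
    padded = list(point) + [1.0]
    result = [sum(row[k] * padded[k] for k in range(4)) for row in matrix]
    return result[:3]


theta = math.radians(30.0)
# Body x-axis expressed in {C}: a unit vector in the x-z plane.
x_body = (math.cos(theta), 0.0, math.sin(theta))

rotated = apply(rotation_about_y(theta), x_body)  # approximately [1, 0, 0]
```

If the result came out as a vector at 60 degrees instead, the sign of the sine terms (or of the angle) would be the wrong way round.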

Sixth, compose the homogeneous transformation matrix $M$ (translation first, then rotation),

$$M = \mathrm{Rot}_y(\theta)\,\mathrm{Trans} \tag{8}$$

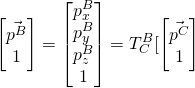

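Finally, given a point P whose coordinates in {C} are given by

$$P^{C} = \langle x^{P},\, y^{P},\, z^{P} \rangle \tag{9}$$

pad the vector with a 1 and compute its coordinates in {B},

$$P^{B} = M \begin{bmatrix} x^{P} \\ y^{P} \\ z^{P} \\ 1 \end{bmatrix} \tag{10}$$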

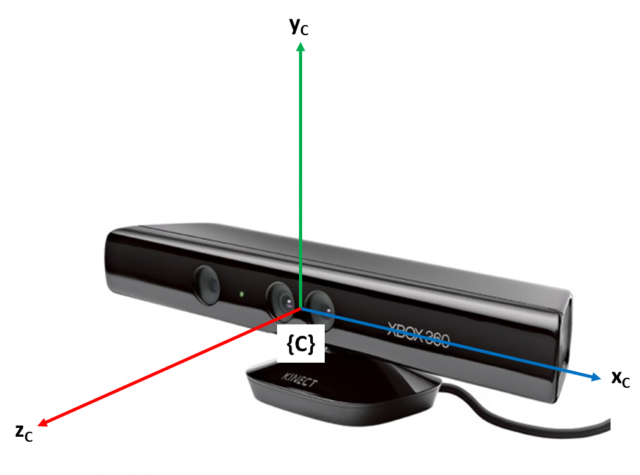
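Putting the summary steps together, a minimal end-to-end sketch in Python might look as follows. It is not the Octave implementation of this post; the function names and the joint coordinates are invented, and it assumes the shoulders never coincide in the x-z plane.

```python
import math


def matmul(a, b):
    """Product of two 4x4 matrices stored as nested lists."""
    return [[sum(a[i][k] * b[k][j] for k in range(4)) for j in range(4)]
            for i in range(4)]


def camera_to_body_matrix(torso, left_shoulder, right_shoulder):
    """Compose M = Rot_y(theta) * Trans from first-frame joints given in {C}."""
    # Translation: the reverse of the torso joint vector.
    trans = [[1.0, 0.0, 0.0, -torso[0]],
             [0.0, 1.0, 0.0, -torso[1]],
             [0.0, 0.0, 1.0, -torso[2]],
             [0.0, 0.0, 0.0, 1.0]]
    # Shoulder line projected onto the x-z plane gives the body x-axis.
    dx = left_shoulder[0] - right_shoulder[0]
    dz = left_shoulder[2] - right_shoulder[2]
    cos_theta = max(-1.0, min(1.0, dx / math.hypot(dx, dz)))
    theta = math.copysign(math.acos(cos_theta), dz)
    c, s = math.cos(theta), math.sin(theta)
    rot = [[c, 0.0, s, 0.0],
           [0.0, 1.0, 0.0, 0.0],
           [-s, 0.0, c, 0.0],
           [0.0, 0.0, 0.0, 1.0]]
    return matmul(rot, trans)  # second operation times first


def transform_point(m, point):
    """Pad the point with 1, multiply by M, and drop the padding again."""
    padded = [point[0], point[1], point[2], 1.0]
    return [sum(m[i][k] * padded[k] for k in range(4)) for i in range(3)]


# Hypothetical first-frame joints in {C} (millimetres): the person stands
# side-on to the sensor, so the shoulder line runs along the camera z-axis.
torso = (100.0, 0.0, 2000.0)
left_shoulder = (100.0, 300.0, 2150.0)
right_shoulder = (100.0, 300.0, 1850.0)

m = camera_to_body_matrix(torso, left_shoulder, right_shoulder)
left_in_body = transform_point(m, left_shoulder)    # about [150, 300, 0]
right_in_body = transform_point(m, right_shoulder)  # about [-150, 300, 0]
```

The matrix is built once from the first frame and then reused for every joint of every frame in the activity instance.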

Details

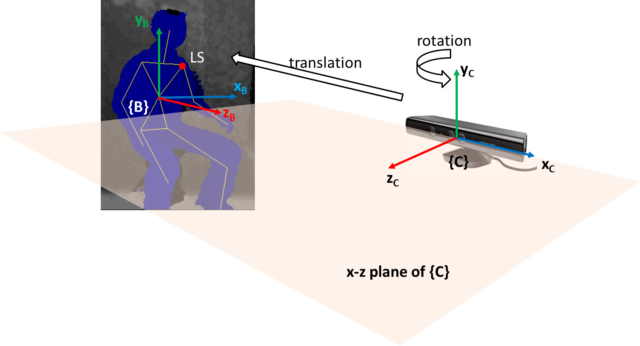

First, I note that the coordinate frame of the Kinect is right-handed. (NOTE: I can’t confirm this. Microsoft’s documentation says it is right-handed, but my system with SimpleOpenNI is left-handed, i.e. the x value increases toward the right of the sensor. Anyway, the following discussion remains valid; only, if the frame is left-handed, the resulting local coordinate system will also be left-handed. I also note that OpenNI calls our left “right” and vice versa, i.e. our right hand is the left hand in OpenNI.) The origin is right at the center of the sensor’s face. The z-axis increases when moving away from the face; the y-axis increases upward; the x-axis increases to the sensor’s left (the viewer’s right). Let’s call this camera coordinate frame {C}.

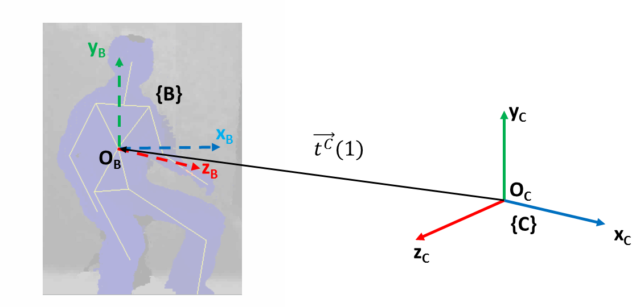

I want to define a body coordinate frame, let’s call it {B}, on the human skeleton detected by the Kinect API. I decided to place {B} at the torso joint of the first frame of an activity instance. Alternatively, I could have placed {B} at the torso joint of each frame. In that case, I would need to compute the transformation matrix for every frame, and the person would appear stationary; I would then require an additional feature to capture the movement with respect to the world. Note that an activity instance comprises a series of frames, each holding one posture.

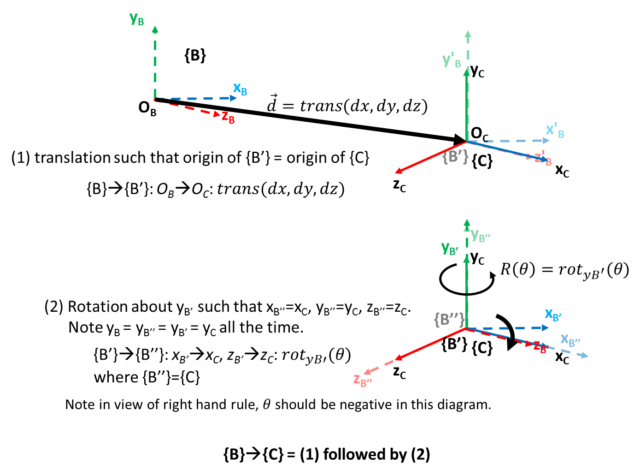

To simplify the transformation, I keep the y-axis of {B} parallel to the y-axis of {C}, i.e. pointing upward. The x-z plane of {B} is also parallel to that of {C}. In this way, the required transformation consists only of a rotation around the y-axis in {C}, which aligns {B} such that its z-axis points to the front of the person, and a translation from the origin of {C}, i.e. <0,0,0>, to the torso joint position in the first frame.

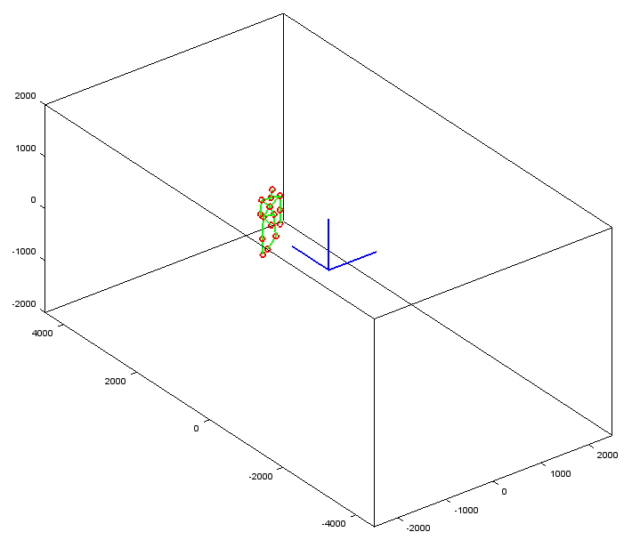

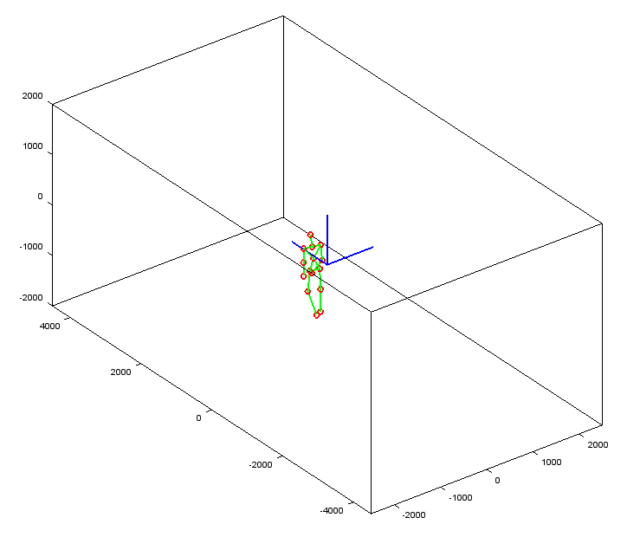

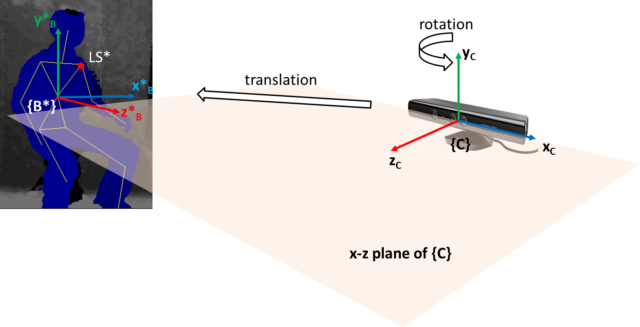

Note that in the above two figures, the relative positions of the person and the camera are different, i.e. the camera is looking from different viewpoints. However, the person is in the same posture. Before any transformation (in {C}), the coordinates of any joint (e.g. the left shoulder LS) will be different in the two scenarios. Whereas if the coordinate frame is transformed to the body, {B}, the coordinates of $\overrightarrow{LS}$ and $\overrightarrow{LS^{*}}$ (these are the vectors from the origin of the reference frame to the points LS and LS* respectively), for example, are the same. In this way, the coordinates are view-invariant and we can better recognise similar postures. In the equations below, a superscript is used to indicate the reference coordinate frame.

$$\overrightarrow{LS}^{C} \neq \overrightarrow{LS^{*}}^{C} \tag{11}$$

$$\overrightarrow{LS}^{B} = \overrightarrow{LS^{*}}^{B} \tag{12}$$
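The view-invariance claim can be checked numerically. In the Python sketch below (not from the original Octave code), one made-up posture is placed in front of the camera in two different poses; `seen_from_camera` is a hypothetical helper that simulates what the sensor would report, and `to_body` applies the translation and the rotation about y directly, without building a matrix.

```python
import math


def to_body(point, torso, left_shoulder, right_shoulder):
    """Translate by -torso, then rotate about y so the shoulders lie along +x."""
    dx = left_shoulder[0] - right_shoulder[0]
    dz = left_shoulder[2] - right_shoulder[2]
    length = math.hypot(dx, dz)
    c, s = dx / length, dz / length  # cos(theta), sin(theta)
    x, y, z = (point[i] - torso[i] for i in range(3))
    return (c * x + s * z, y, -s * x + c * z)


def seen_from_camera(point_body, heading, position):
    """Hypothetical helper: express a body-frame point in {C} for a given pose."""
    c, s = math.cos(heading), math.sin(heading)
    x, y, z = point_body
    return (c * x - s * z + position[0],
            y + position[1],
            s * x + c * z + position[2])


# One posture, described in the body frame (made-up numbers, millimetres).
posture = {
    "torso": (0.0, 0.0, 0.0),
    "left_shoulder": (150.0, 300.0, 0.0),
    "right_shoulder": (-150.0, 300.0, 0.0),
    "left_hand": (400.0, 100.0, 250.0),
}


def observe(heading_degrees, position):
    """Camera coordinates of every joint for one viewpoint."""
    heading = math.radians(heading_degrees)
    return {name: seen_from_camera(p, heading, position)
            for name, p in posture.items()}


def hand_in_body(view):
    return to_body(view["left_hand"], view["torso"],
                   view["left_shoulder"], view["right_shoulder"])


view_a = observe(20.0, (-300.0, 0.0, 1800.0))
view_b = observe(-70.0, (500.0, 50.0, 2600.0))

hand_a = hand_in_body(view_a)
hand_b = hand_in_body(view_b)
```

The camera coordinates of the left hand differ between the two views, while `hand_a` and `hand_b` agree up to rounding.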

To transform the coordinates of a point in {C}, $P^{C}$, to coordinates in {B}, $P^{B}$, we need to determine the homogeneous transformation matrix $M$ such that:

$$P^{B} = M\,P^{C} \tag{13}$$

where,

$$M = \mathrm{Rot}_y(\theta)\,\mathrm{Trans} \tag{14}$$

$M$ is the required transformation to move {B} such that it aligns with {C}. Note that when labeling axes and components, I have used superscripts, e.g. $x^{C}$ is the x-axis of {C}; $x^{P}$ is the x-component of vector $P$.

Note that the two operations could also be applied in the opposite order, i.e. rotation first and then translation. Either way, the composed matrix is always the second operation multiplied by the first, (2)*(1). Because matrix multiplication does not commute, the translation would have to be re-expressed in the rotated frame to arrive at the same $M$. Further, if we do the rotation first, we would be rotating around the y-axis of {C}, since we are using the coordinates from {C}, so the person would swing around the camera instead of turning on the spot. This would not give the desired effect of aligning the body’s front with the z-axis. I will do translation followed by rotation.
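The effect of the order can be seen with a small experiment. The Python sketch below (helper names and numbers are invented) builds the same rotation and translation matrices and multiplies them in both orders; only "translate, then rotate" sends the torso joint to the origin.

```python
import math


def matmul(a, b):
    """Product of two 4x4 matrices stored as nested lists."""
    return [[sum(a[i][k] * b[k][j] for k in range(4)) for j in range(4)]
            for i in range(4)]


def apply(matrix, point):
    """Pad a 3-D point with 1, multiply by a 4x4 matrix, drop the padding."""
    padded = list(point) + [1.0]
    return [sum(row[k] * padded[k] for k in range(4)) for row in matrix][:3]


# Hypothetical torso joint in {C} and a 90-degree rotation about y.
torso = (100.0, 0.0, 2000.0)
c, s = math.cos(math.radians(90.0)), math.sin(math.radians(90.0))

rot = [[c, 0.0, s, 0.0],
       [0.0, 1.0, 0.0, 0.0],
       [-s, 0.0, c, 0.0],
       [0.0, 0.0, 0.0, 1.0]]
trans = [[1.0, 0.0, 0.0, -torso[0]],
         [0.0, 1.0, 0.0, -torso[1]],
         [0.0, 0.0, 1.0, -torso[2]],
         [0.0, 0.0, 0.0, 1.0]]

# The operation applied first sits on the right of the product.
translate_then_rotate = matmul(rot, trans)
rotate_then_translate = matmul(trans, rot)

torso_a = apply(translate_then_rotate, torso)  # about [0, 0, 0]
torso_b = apply(rotate_then_translate, torso)  # about [1900, 0, -2100]
```

With rotation first, the torso is swung around the camera's y-axis before the shift, so the same translation no longer cancels it.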

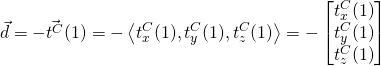

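The translation, $\vec{d}$, is simply the reverse of the torso joint vector $\vec{T}^{C}_{(1)}$ in the first frame. Note (1) is used to indicate frame 1.

$$\vec{d} = -\vec{T}^{C}_{(1)} = \langle -x^{T},\, -y^{T},\, -z^{T} \rangle \tag{15}$$

The translation can be expressed in homogeneous form,

$$\mathrm{Trans} = \begin{bmatrix} 1 & 0 & 0 & -x^{T} \\ 0 & 1 & 0 & -y^{T} \\ 0 & 0 & 1 & -z^{T} \\ 0 & 0 & 0 & 1 \end{bmatrix} \tag{16}$$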

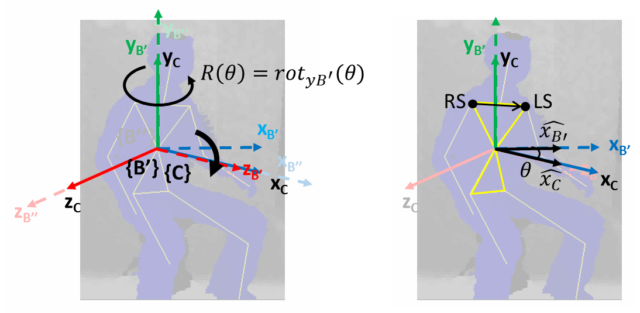

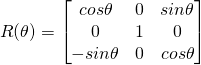

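After the translation, the origins of {B} and {C} coincide and their y-axes are aligned. Call this translated frame {B'}. A rotation is still required to align the other two axes. The rotation matrix around the y-axis that moves the x- and z-axes of {B'} (considered as vectors in this movement) to align with the corresponding axes of {C} (i.e. such that {B''} = {C}) is given by:

$$\mathrm{Rot}_y(\theta) = \begin{bmatrix} \cos\theta & 0 & \sin\theta & 0 \\ 0 & 1 & 0 & 0 \\ -\sin\theta & 0 & \cos\theta & 0 \\ 0 & 0 & 0 & 1 \end{bmatrix} \tag{17}$$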

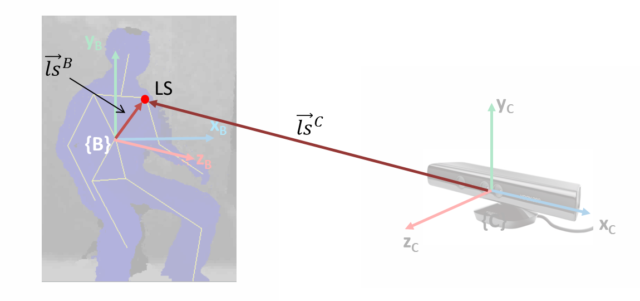

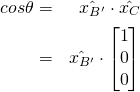

The following diagram shows the vectors needed to determine $\theta$. I basically use the vector connecting the left and right shoulder joints to determine the direction of the x-axis in {B'} ($x^{B'}$) and then use the dot product to compute the angle $\theta$ between it and the original x-axis in {C} ($x^{C}$).

$\hat{x}^{B'}$ and $\hat{x}^{C}$ are unit vectors in the direction of the $x^{B'}$ and $x^{C}$ axes respectively. The value of $\theta$ can be determined using the dot product and the criterion that $\hat{x}^{B'}$ stays on (parallel to) the x-z plane. Since the data for the computation are expected to be with reference to {C} (from Kinect), all values are taken with reference to {C}.

$$\hat{x}^{B'} \cdot \hat{x}^{C} = |\hat{x}^{B'}|\,|\hat{x}^{C}|\cos\theta \tag{18}$$

Since $\hat{x}^{B'}$ and $\hat{x}^{C}$ are of unit length, and $\hat{x}^{C} = \langle 1, 0, 0 \rangle$ with reference to {C}, we have

$$\cos\theta = \hat{x}^{B'} \cdot \langle 1, 0, 0 \rangle \tag{19}$$

Note the use of cosine makes the sign of ![]() irrelevant. However, the sign will be needed eventually when computing the sines. To determine

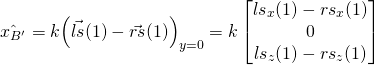

irrelevant. However, the sign will be needed eventually when computing the sines. To determine ![]() , we note

, we note ![]() is parallel to

is parallel to ![]() (

(![]() and

and ![]() are vectors from origin to

are vectors from origin to LS and RS respectively). Further, when parallel to x-z plane, the y-component is made zero.

(20)

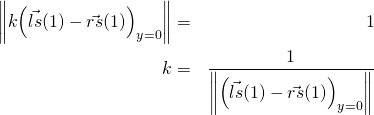

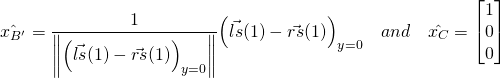

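To find k, we note that $\hat{x}^{B'}$ is a unit vector, i.e. has unit magnitude.

$$|\hat{x}^{B'}| = 1 \;\Rightarrow\; k = \frac{1}{\sqrt{(x^{LS}-x^{RS})^{2} + (z^{LS}-z^{RS})^{2}}} \tag{21}$$

From (19), (20) and (21), we have

$$\cos\theta = \frac{\langle x^{LS}-x^{RS},\; 0,\; z^{LS}-z^{RS} \rangle \cdot \langle 1, 0, 0 \rangle}{\sqrt{(x^{LS}-x^{RS})^{2} + (z^{LS}-z^{RS})^{2}}} \tag{22}$$

$$|\theta| = \cos^{-1}\left(\frac{\langle x^{LS}-x^{RS},\; 0,\; z^{LS}-z^{RS} \rangle \cdot \langle 1, 0, 0 \rangle}{\sqrt{(x^{LS}-x^{RS})^{2} + (z^{LS}-z^{RS})^{2}}}\right) \tag{23}$$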

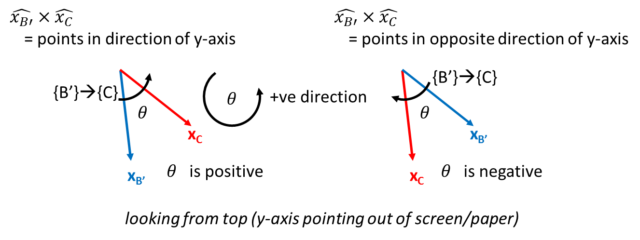

Note the two vectors in (23) are operated by dot product. In (23), I do not have the sign of ![]() . To determine, the sign of

. To determine, the sign of ![]() , I use the cross product of

, I use the cross product of ![]() and

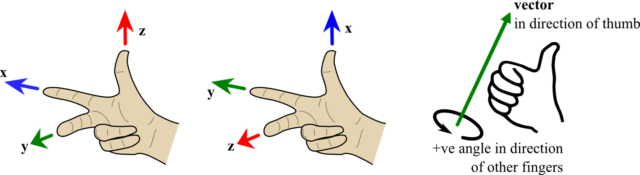

and ![]() . Note right handed rule being applied to determine positive direction, as well as the resultant vector of cross product.

. Note right handed rule being applied to determine positive direction, as well as the resultant vector of cross product.

From the above diagram, if the result of the cross product is in the direction of y-axis, ![]() is positive, otherwise

is positive, otherwise ![]() is negative. Therefore,

is negative. Therefore, ![]() has the sign of the y-component of

has the sign of the y-component of ![]() . Note also the x and z components of the cross-product are expected to be zero (since it is along y-axis).

. Note also the x and z components of the cross-product are expected to be zero (since it is along y-axis).

(24) ![]()
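As a sanity check on the sign rule, the Python sketch below compares "inverse cosine plus cross-product sign" against `math.atan2` applied to the z- and x-components of the body x-axis. The `atan2` shortcut is my own cross-check here, not the method used in this post, and the function names are made up.

```python
import math


def cross(a, b):
    """Cross product of two 3-D vectors."""
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])


def signed_theta(x_body, x_camera=(1.0, 0.0, 0.0)):
    """Angle about y that rotates x_body onto x_camera (both unit vectors)."""
    dot = sum(p * q for p, q in zip(x_body, x_camera))
    magnitude = math.acos(max(-1.0, min(1.0, dot)))
    normal = cross(x_body, x_camera)
    # Cross product along +y means a positive rotation about y.
    return magnitude if normal[1] >= 0.0 else -magnitude


comparison = []
for degrees in (-150, -90, -30, 0, 30, 90, 150):
    angle = math.radians(degrees)
    # Body x-axis in {C}: unit vector in the x-z plane at this angle.
    x_body = (math.cos(angle), 0.0, math.sin(angle))
    comparison.append((signed_theta(x_body),
                       math.atan2(x_body[2], x_body[0])))
```

Each pair in `comparison` holds the same angle computed both ways, which suggests the sign could equally be read off the z-component of the body x-axis.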

With (15) and (17), we can transform the coordinates of a point in {C}, $P^{C}$, to coordinates in {B}, $P^{B}$,

$$P^{B} = M\,P^{C} \tag{25}$$

where,

$$M = \mathrm{Rot}_y(\theta)\,\mathrm{Trans} = \begin{bmatrix} \cos\theta & 0 & \sin\theta & -x^{T}\cos\theta - z^{T}\sin\theta \\ 0 & 1 & 0 & -y^{T} \\ -\sin\theta & 0 & \cos\theta & x^{T}\sin\theta - z^{T}\cos\theta \\ 0 & 0 & 0 & 1 \end{bmatrix} \tag{26}$$

and $\theta$ is given in (24), and $x^{T}$, $y^{T}$ and $z^{T}$ are the coordinates of the torso joint in the first frame. To use the 4×4 transformation matrix, the coordinates of a vector in {C} must be padded:

$$P^{C} = \begin{bmatrix} x^{P} & y^{P} & z^{P} & 1 \end{bmatrix}^{\top} \tag{27}$$

The resultant vector will be 4×1, and the padded fourth element can be removed to obtain a 3×1 vector (just the x, y and z components). Note that all coordinates used to build the matrix are taken with reference to {C}.
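The composed matrix can also be written out entry by entry, which avoids a matrix product at run time. The Python sketch below assumes the standard rotation about y applied after a translation by the negated torso vector; the function names, the angle and the coordinates are illustrative only.

```python
import math


def camera_to_body_matrix(theta, torso):
    """Closed form of Rot_y(theta) * Trans(-torso) as a 4x4 nested list."""
    c, s = math.cos(theta), math.sin(theta)
    tx, ty, tz = torso
    return [
        [c, 0.0, s, -c * tx - s * tz],
        [0.0, 1.0, 0.0, -ty],
        [-s, 0.0, c, s * tx - c * tz],
        [0.0, 0.0, 0.0, 1.0],
    ]


def transform(matrix, point):
    """Pad with 1, multiply, then strip the padded fourth element."""
    padded = [point[0], point[1], point[2], 1.0]
    product = [sum(row[k] * padded[k] for k in range(4)) for row in matrix]
    return tuple(product[:3])


# Hypothetical values: torso joint in {C} and a rotation of -90 degrees.
torso = (-200.0, 50.0, 1500.0)
m = camera_to_body_matrix(-math.pi / 2, torso)

torso_in_body = transform(m, torso)                     # about (0, 0, 0)
point_in_body = transform(m, (-200.0, 350.0, 1350.0))  # about (150, 300, 0)
```

The fourth component of the product is always 1 for this kind of matrix, so dropping it loses no information.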

Octave implementation

Here’s the Octave implementation of the transformation, including code to transform all examples (instances) in a given file.

Background knowledge

I found the note on homogeneous transforms by Jennifer Kay from Rowan University the most readable for a novice like myself. Jennifer uses a good number of illustrations and simple examples to explain the concepts of coordinate transformation and its application to the forward kinematics of a robotic arm. There are a number of things I found difficult to grasp when reading her note; here are a few:

- The arrangement of the axes is different from the Kinect coordinate system I am familiar with, in which the y-axis points upward; however, this is normal, as different systems adopt different axis arrangements.

- The explanation of the right hand rule seems a little complicated; it is sufficient to use the following diagrams to explain how the right hand rule determines the relative position of the axes as well as the positive rotation direction.

Right hand rule: note there is no consistency in the assignment of axes to fingers; it is the order that matters.

- The elements used in the homogeneous transformation matrix are not explained, i.e. “these are the formulas, trust them”. Likewise, the note starts by using four coordinates to represent a point, and it was difficult to appreciate the extra “weight” component. It helps not to be curious, at this point, about where those cosines and sines and their arrangement in a 4×4 matrix come from. Just accept them for now, since we can clearly see that they work. That way, we can quickly use them in our application and then probe further to understand homogeneous transformation.

[Jennifer Kay’s note on homogeneous transforms (PDF)](http://elvis.rowan.edu/~kay/papers/kinematics.pdf)

Jennifer’s note is based on the tutorial “Essential Kinematics for Autonomous Vehicles” (this is a later version) by Alonzo Kelly from CMU, which I found Jennifer has done an excellent job of explaining clearly. To probe further into how the homogeneous transformation matrix is derived, I found the note on Kwon3D quite easy to digest.

Kwon3D also explains the difference between rotating the axes (coordinate frame rotation) and rotating a vector (no change to the coordinate frame), which is an important concept to grasp in order to understand transformation.

Still, I haven’t fully understood everything explained. One of the problems was my lack of understanding or intuition of the dot product and vectors. The videos on Khan Academy explain some of these concepts well.

Here’s an explanation of the cross product.

Files

TODO: Instructions on how to use.

- Octave file transformAllToFirstFrameF.m (function)

- Files to draw the skeleton before and after transformation (takes the Xsamp or XsampT files): drawSkeleton.m and drawSkeletonForOneExample.m (called by drawSkeleton.m)